Sometimes geotiffs are too large to process. For example, when transcribing text on a map as the previous post describes, Azure Cognitive Services limits file size to 4 MB on the free tier and 50 MB on the paid tier. Also, for deep learning tasks, I have found it easier to divide large geotiffs into smaller, uniformly sized images for training and object detection.
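As a quick illustration of the size limits above, here is a minimal Python sketch that checks whether a file is too big for a given tier. The names `LIMITS_MB` and `exceeds_limit` are hypothetical, and the sketch assumes 1 MB means 1024 x 1024 bytes; the service may count sizes differently.

```python
MB = 1024 * 1024  # assumption: 1 MB = 1,048,576 bytes

# Tier limits as quoted in this post (4 MB free, 50 MB paid).
LIMITS_MB = {"free": 4, "paid": 50}

def exceeds_limit(size_bytes, tier="free"):
    """Return True if a file of size_bytes is over the limit for the tier."""
    return size_bytes > LIMITS_MB[tier] * MB

# For a file on disk, the size could come from os.path.getsize(path).
example_size = int(17.45 * MB)  # roughly the size of the geotiff shown later
print(exceeds_limit(example_size, "free"))
print(exceeds_limit(example_size, "paid"))
```

Under these assumptions, a 17.45 MB geotiff is over the free-tier limit but under the paid-tier one.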

These are notes on the tiling technique I’m using to work with the geotiffs that the CRANE project uses to study the ancient Near East.

Before going further, I want to reference a very interesting article, “Potential of deep learning segmentation for the extraction of archaeological features from historical map series” [1]. The authors demonstrate a working process to recognize map text and symbols using picterra.ch. Their technique and their work with maps of Syria are of particular interest for my work on the CRANE-CCAD project. Thanks to Dr. Shawn Graham for sending me this.

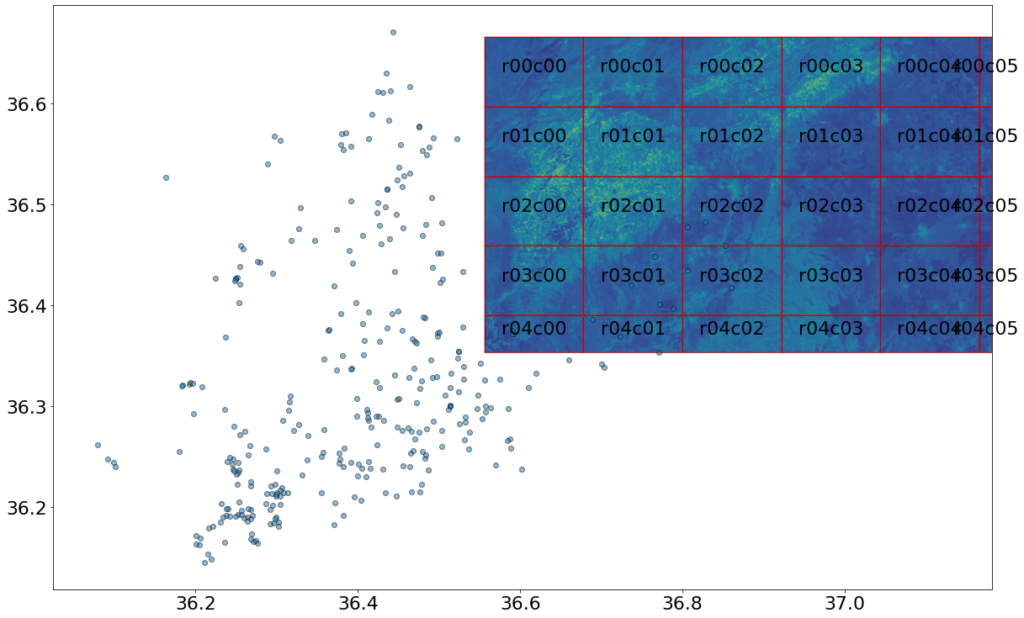

Below is a large geotiff that has points of interest plotted on it for possible analysis using deep learning. The file, nimadata1056734636.tif, is 17.45 MB in size, 5254 pixels wide and 3477 pixels high, which makes it ungainly. The program tiles the geotiff into smaller 1024 x 768 images, as shown by the red grid below.
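To make the grid arithmetic concrete, here is a minimal Python sketch (not the tiling program itself) that computes how many 1024 x 768 tiles are needed to cover a 5254 x 3477 image. The function name `tile_grid` is hypothetical, and the sketch assumes that partial tiles at the right and bottom edges still count as tiles.

```python
import math

def tile_grid(width, height, tile_w=1024, tile_h=768):
    """Return (rows, cols) of tiles needed to cover a width x height image."""
    cols = math.ceil(width / tile_w)   # tiles across
    rows = math.ceil(height / tile_h)  # tiles down
    return rows, cols

rows, cols = tile_grid(5254, 3477)
print(rows, cols)  # prints "5 6"
```

Under these assumptions the image needs 5 rows and 6 columns, or 30 tiles. The last column covers only the remaining 134 pixels of width and the last row the remaining 405 pixels of height, so a tiling program has to either crop or pad those edge tiles.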

The tiles are stored in a directory with file names in the format rXXcYY.tif, where rows are numbered from 0 at the top and columns from 0 at the left. Below is the tif tile from row 3, column 2.
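The naming scheme above can be sketched in Python as follows. This is an illustration rather than the tiling program itself: `tile_name` and `tile_windows` are hypothetical names, the two-digit zero padding is my reading of rXXcYY, and the sketch assumes edge tiles are cropped to the image bounds rather than padded. Each window is a pixel rectangle that could then be passed to a raster library to read and write the tile.

```python
def tile_name(row, col):
    """Build a file name such as r03c02.tif (assumes two-digit zero padding)."""
    return f"r{row:02d}c{col:02d}.tif"

def tile_windows(width, height, tile_w=1024, tile_h=768):
    """Yield (file_name, (x_off, y_off, w, h)) for each tile.

    Rows count from 0 at the top, columns from 0 at the left.
    Edge tiles are cropped to the image bounds (an assumption).
    """
    for row, y in enumerate(range(0, height, tile_h)):
        for col, x in enumerate(range(0, width, tile_w)):
            w = min(tile_w, width - x)
            h = min(tile_h, height - y)
            yield tile_name(row, col), (x, y, w, h)

windows = dict(tile_windows(5254, 3477))
print(windows["r03c02.tif"])  # prints "(2048, 2304, 1024, 768)"
```

With this layout, the tile for row 3, column 2 starts 2048 pixels from the left edge and 2304 pixels from the top of the full image.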

These uniform geotiff tiles can be used to train a deep learning model or be submitted to Azure Cognitive Services. A copy of the tiling program is here.

Thank you to CRANE and Dr. Stephen Batiuk for these images.

Reference:

[1] Garcia-Molsosa A, Orengo HA, Lawrence D, Philip G, Hopper K, Petrie CA. Potential of deep learning segmentation for the extraction of archaeological features from historical map series. Archaeological Prospection. 2021;1–13. https://doi.org/10.1002/arp.1807